Always-on host

Use a Mac mini or desktop as the stable place where work runs.

Mac mini AI Server

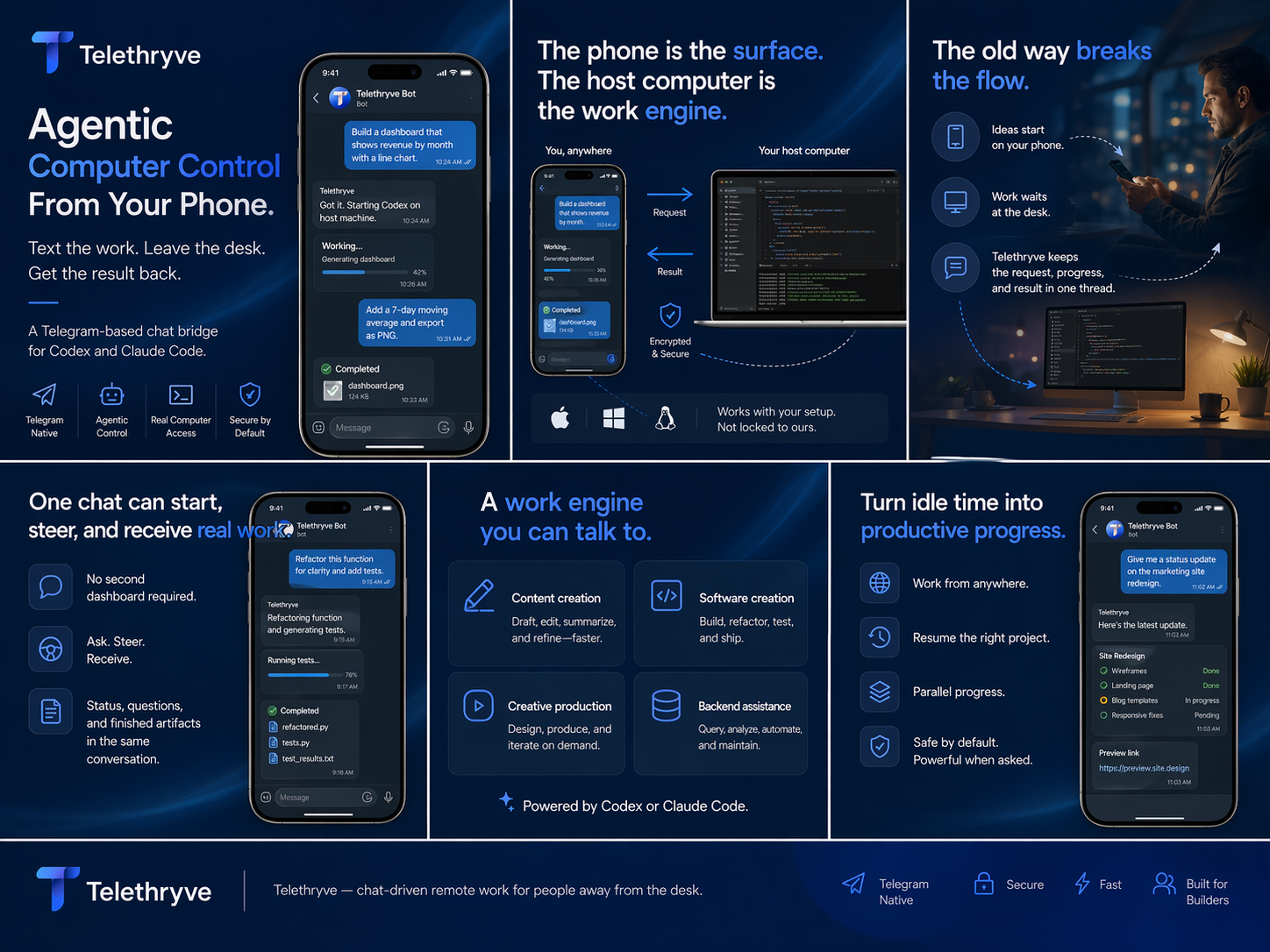

Telethryve positions a Mac mini, desktop, or always-on workstation as the place where mobile AI requests can use local files, local models, tools, and agent workflows.

Search Intent

A small dedicated machine is more useful when it is easy to command from a phone. Telethryve turns that setup into a conversational AI workstation instead of a silent box on the network.

Why Telethryve

Each page gives search engines and launch visitors a specific reason to understand, share, and link to Telethryve.

Use a Mac mini or desktop as the stable place where work runs.

Pair a local LLM, memory, retrieval, and tool execution with mobile command.

Start the request from the phone while the host machine does the heavy lifting.

Use Case

Typical uses include local LLM experiments, private document workflows, code tasks, home-office automation, creator pipelines, and founder operating systems.

FAQ

A Mac mini is not required. It is one useful host shape, but Telethryve is about a workstation that can keep files, tools, models, and workflows available.

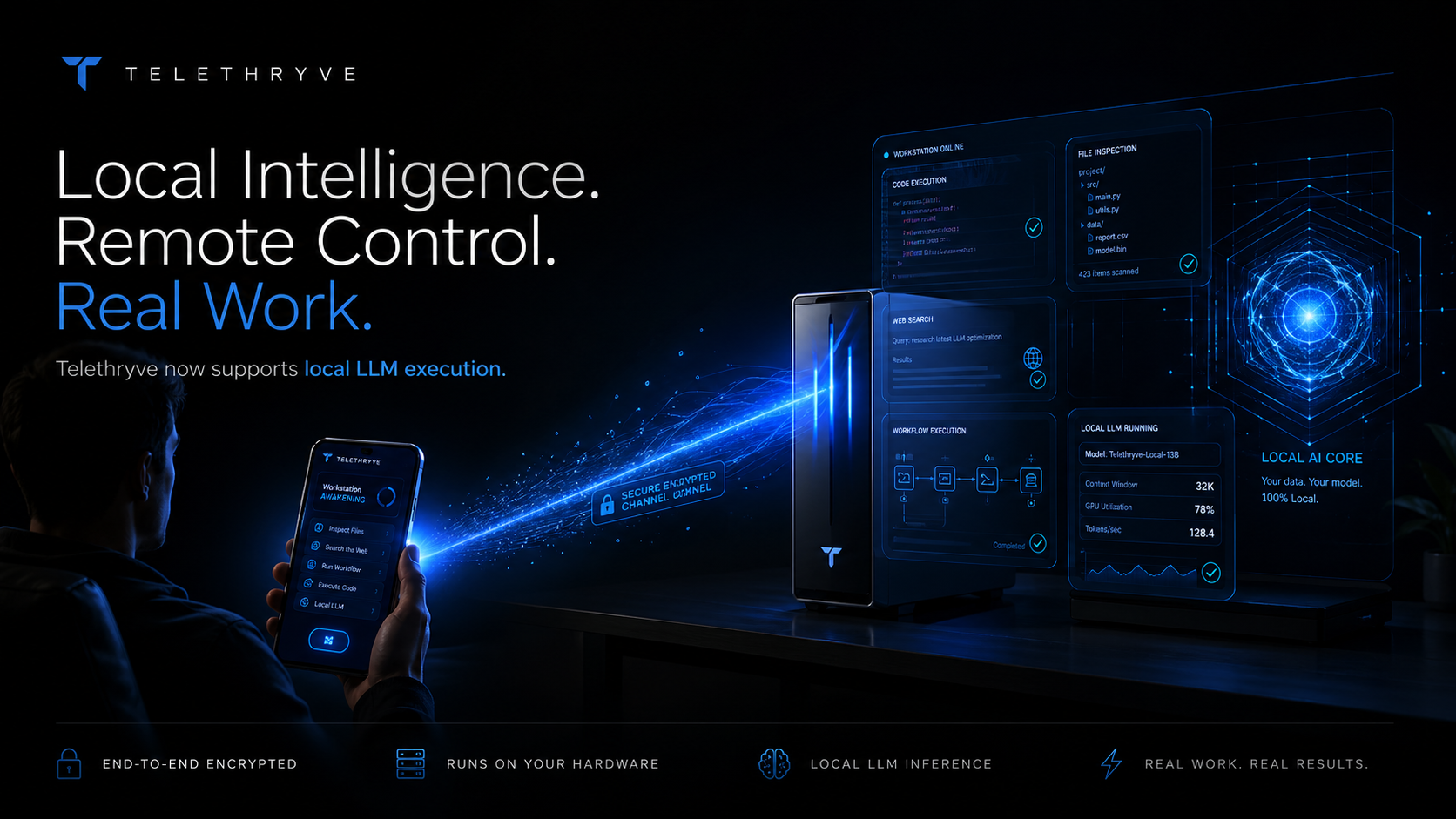

Telethryve's launch positioning includes local LLM, hybrid, and air-gapped workstation modes when configured.

Related Workflows

Telethryve turns a phone into the command surface for a real AI workstation, so files, tools, browsers, code, local models, and results stay connected to your own machine.

Telethryve supports local LLM and hybrid workstation workflows so sensitive context can stay closer to the machine where the work already lives.

Telethryve's air-gapped mode positions the workstation as a private AI environment for local LLM reasoning, memory, retrieval, files, and tool execution without cloud dependence.

Telethryve lets a phone dispatch Codex-style agent workflows to the workstation where repositories, files, tools, and project context already live.

Telethryve connects agentic AI workflows to the host workstation so tasks can use files, tools, browsers, code, local memory, and real project context.

For people searching for a remote AI workstation, Telethryve offers a practical answer: send the instruction from a phone while the real computer keeps the files, tools, projects, models, and outputs.

Telethryve helps people who start with ChatGPT-style mobile prompts turn ideas into workstation tasks that can use local files, tools, agents, and project context.

For people searching for Codex on iPhone, Telethryve offers a practical mobile workflow: send a coding request from the phone while the workstation keeps the repository, terminal, tools, and tests.

Telethryve is a mobile command layer for Codex-style project work, helping a phone request become agentic coding, review, docs, tests, or release chores on the workstation.

Telethryve helps founders turn phone moments into real workstation tasks for product, code, content, research, launch, support, and operating workflows.

Download Telethryve, watch the product story, read the launch proof, and follow the build from mobile AI workstation to local LLM and air-gapped AI workflows.

Next Step

For launch, every high-intent page gives a more precise destination than the homepage alone.