Local model path

Use a local model when private context, offline access, or machine-local reasoning matters.

Local LLM Workstation

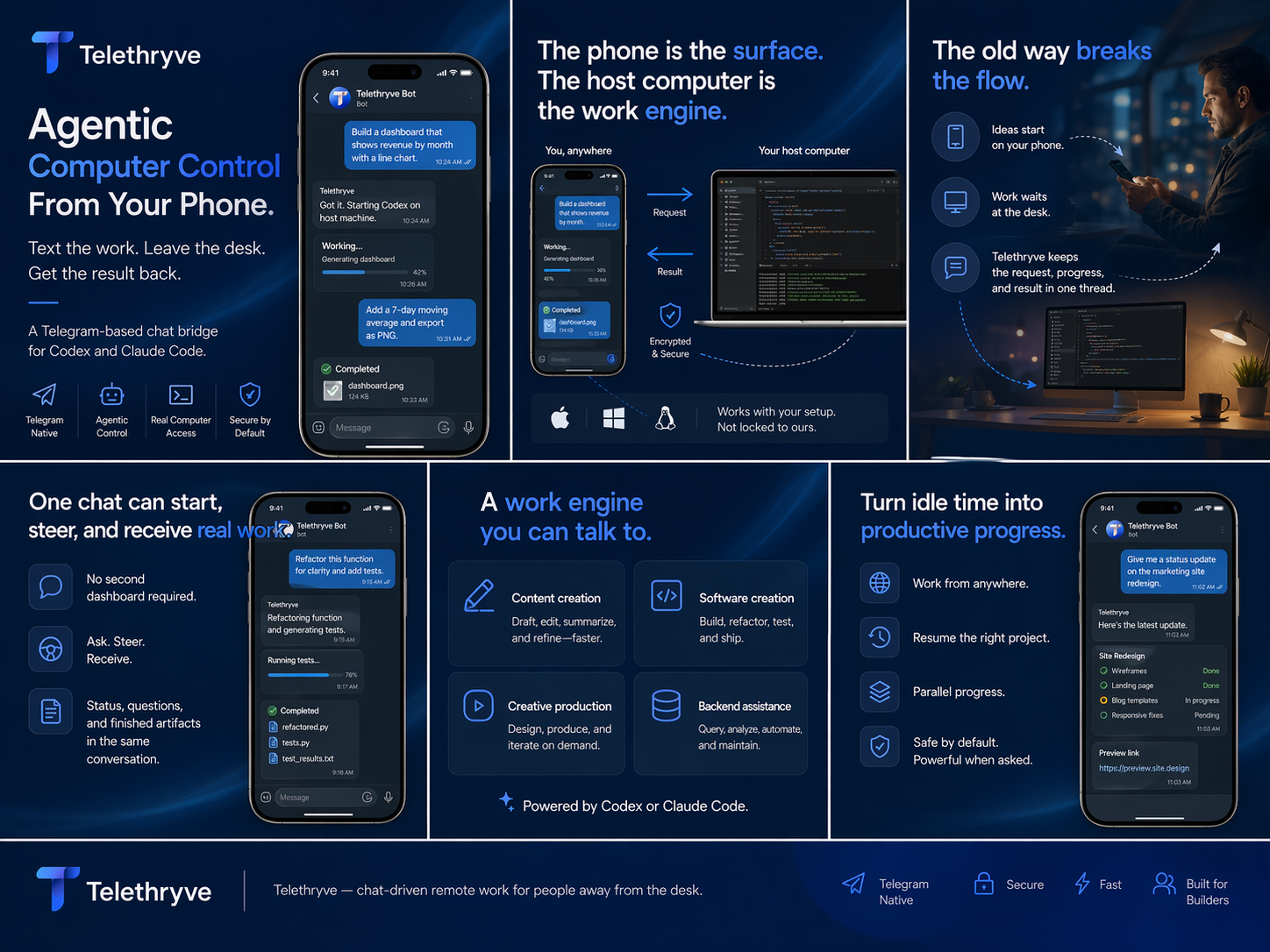

Telethryve supports local LLM and hybrid workstation workflows so sensitive context can stay closer to the machine where the work already lives.

Search Intent

A local LLM is more useful when it can sit near the actual working environment. Telethryve frames the host machine as the AI work surface, not just a chat window.

Why Telethryve

Each page gives search engines and launch visitors a specific reason to understand, share, and link to Telethryve.

Use a local model when private context, offline access, or machine-local reasoning matters.

Keep connected tools available while routing analysis or drafting through local intelligence.

Let local files, notes, code, and documents become the center of the work environment.

Use Case

This fits privacy-sensitive research, document workflows, local coding, and controlled experimentation, as well as teams that need an alternative to cloud-only AI paths.

FAQ

Telethryve is not limited to local models. It can use local, hybrid, and hosted paths depending on the configured profile and task.

Local reasoning is not limited to one kind of work. It can help with documents, notes, summaries, creative production, and operations work too.
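
The idea that the path depends on the configured profile and task can be sketched as a small routing function. This is only an illustration under stated assumptions, not Telethryve's actual configuration format or API: `ModelPath`, `Profile`, `Task`, `choose_path`, and the policy itself are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class ModelPath(Enum):
    """The three paths named on this page (hypothetical representation)."""
    LOCAL = "local"
    HYBRID = "hybrid"
    HOSTED = "hosted"


@dataclass(frozen=True)
class Profile:
    """Hypothetical profile; not Telethryve's real configuration schema."""
    name: str
    default_path: ModelPath
    offline: bool = False  # True when no network access is available


@dataclass(frozen=True)
class Task:
    """Hypothetical description of one unit of work."""
    kind: str              # e.g. "analysis" or "drafting"
    private_context: bool  # True when the task touches sensitive local material


def choose_path(profile: Profile, task: Task) -> ModelPath:
    """Pick a path for one task under an assumed, illustrative policy."""
    # Assumed rule: private context or an offline machine keeps work local.
    if task.private_context or profile.offline:
        return ModelPath.LOCAL
    # Otherwise fall back to whatever the profile is configured to use.
    return profile.default_path


research = Profile(name="research", default_path=ModelPath.HOSTED)
print(choose_path(research, Task(kind="analysis", private_context=True)).value)
print(choose_path(research, Task(kind="drafting", private_context=False)).value)
```

In this sketch the privacy and offline checks come first, so a sensitive task stays on the machine even when the profile defaults to a hosted path.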

Next Step

For launch, every high-intent page gives a more precise destination than the homepage alone.